Modern tools open up many new ways to explore and interact with Bible verses. I recently built a personal project along these lines: a Q&A platform tailored specifically to the King James Version (KJV) of the Bible.

Extracting the KJV Bible

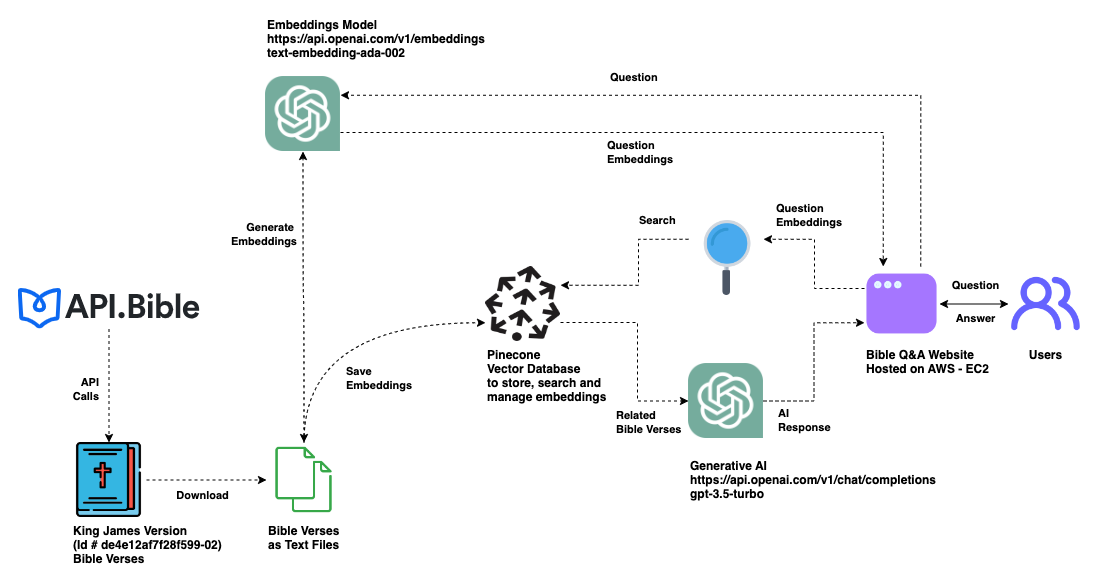

The first step was acquiring the text. The Bible API (api.bible) returns verses in a structured format, so extracting the full text of the KJV Bible was straightforward.

Note: Bible verses are taken from the King James Version (KJV) and sourced from api.bible (Bible ID: de4e12af7f28f599-02).
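A minimal sketch of the extraction walk, using only the Python standard library. It assumes api.bible's v1 REST layout: base URL `https://api.scripture.api.bible/v1`, the key sent in an `api-key` request header, and JSON responses wrapped in a `data` field. It walks books, then chapters, then verse IDs for the KJV Bible ID above. The function names are hypothetical, and fetching each verse's text and handling rate limits are left out.

```python
import json
import urllib.request
from typing import Callable, Iterator, List

# Assumed api.bible v1 base URL; the Bible ID is the KJV ID from the note above.
API_BASE = "https://api.scripture.api.bible/v1"
BIBLE_ID = "de4e12af7f28f599-02"


def make_request(path: str, api_key: str) -> urllib.request.Request:
    """Build a GET request; api.bible expects the key in an `api-key` header."""
    return urllib.request.Request(f"{API_BASE}{path}", headers={"api-key": api_key})


def get_data(path: str, api_key: str, opener: Callable = urllib.request.urlopen) -> List[dict]:
    """Fetch one endpoint and return the `data` list from its JSON body."""
    with opener(make_request(path, api_key)) as resp:
        return json.load(resp)["data"]


def iter_verse_ids(api_key: str, opener: Callable = urllib.request.urlopen) -> Iterator[str]:
    """Walk books -> chapters -> verses and yield every verse ID (e.g. "GEN.1.1")."""
    for book in get_data(f"/bibles/{BIBLE_ID}/books", api_key, opener):
        chapters = get_data(f"/bibles/{BIBLE_ID}/books/{book['id']}/chapters", api_key, opener)
        for chapter in chapters:
            verses = get_data(f"/bibles/{BIBLE_ID}/chapters/{chapter['id']}/verses", api_key, opener)
            for verse in verses:
                yield verse["id"]
```

The `opener` parameter exists only so the walk can be exercised without network access; in normal use you would pass just the API key and let `urllib.request.urlopen` make the calls.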

Generating Embeddings with OpenAI

With the verses in hand, the next challenge was representing their meaning in a searchable form. For this I used OpenAI's text-embedding-ada-002 model, which turns text into embeddings: numerical vectors (1536 dimensions for this model) that capture the meaning of the text, so verses with similar content end up close together in vector space.
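A minimal sketch of the embedding step. It assumes the verses are already in a dict mapping verse ID to text and that the current (v1) OpenAI Python SDK is installed. `embed_verses`, `openai_embed`, and the batch size of 100 are illustrative choices, not the original project's code. Batching keeps each request small instead of sending tens of thousands of verses at once.

```python
from typing import Callable, Dict, List

EMBED_MODEL = "text-embedding-ada-002"
Vector = List[float]


def openai_embed(texts: List[str]) -> List[Vector]:
    """Embed one batch with the OpenAI Python SDK (v1 interface).

    Reads OPENAI_API_KEY from the environment. The import is deferred so the
    batching helper below can be used (and tested) without the SDK installed.
    """
    from openai import OpenAI

    client = OpenAI()
    resp = client.embeddings.create(model=EMBED_MODEL, input=texts)
    # Each item carries the index of its input; sort to keep inputs and vectors aligned.
    return [item.embedding for item in sorted(resp.data, key=lambda d: d.index)]


def embed_verses(
    verses: Dict[str, str],
    embed: Callable[[List[str]], List[Vector]] = openai_embed,
    batch_size: int = 100,
) -> Dict[str, Vector]:
    """Map verse IDs to embeddings, sending texts to `embed` in fixed-size batches."""
    ids = list(verses)
    vectors: Dict[str, Vector] = {}
    for start in range(0, len(ids), batch_size):
        batch_ids = ids[start:start + batch_size]
        batch_vectors = embed([verses[v] for v in batch_ids])
        vectors.update(zip(batch_ids, batch_vectors))
    return vectors
```

Passing `embed` as a parameter keeps the batching logic independent of the API client, which makes it easy to test or to swap in a different embedding model later.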

Storing Embeddings in Pinecone

A responsive Q&A interface depends on retrieving relevant embeddings quickly. For this I used Pinecone, a managed vector database: after generating the verse embeddings, I stored them in a Pinecone index, which handles fast similarity search when users ask questions.
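A minimal sketch of loading the embeddings into an index. It assumes records shaped as `{"id", "values", "metadata"}` dicts passed to the index's `upsert(vectors=...)` method, with the verse stored under a `text` metadata key (the default key LangChain's Pinecone integration reads). The helper names and the batch size of 100 are hypothetical choices.

```python
from typing import Dict, Iterable, Iterator, List

Vector = List[float]


def to_records(vectors: Dict[str, Vector], texts: Dict[str, str]) -> Iterator[dict]:
    """Turn verse embeddings into Pinecone-style records, keeping the verse text as metadata."""
    for verse_id, values in vectors.items():
        yield {"id": verse_id, "values": values, "metadata": {"text": texts[verse_id]}}


def upsert_in_batches(index, records: Iterable[dict], batch_size: int = 100) -> int:
    """Send records to `index.upsert` in chunks; returns how many records were sent."""
    batch: List[dict] = []
    sent = 0
    for record in records:
        batch.append(record)
        if len(batch) == batch_size:
            index.upsert(vectors=batch)
            sent += len(batch)
            batch = []  # start a fresh list so the sent batch is not mutated
    if batch:
        index.upsert(vectors=batch)
        sent += len(batch)
    return sent
```

With the Pinecone Python client, `index` would be obtained with something like `Pinecone(api_key=...).Index("kjv-verses")`, where the index name is hypothetical and the index must be created with dimension 1536 to match ada-002.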

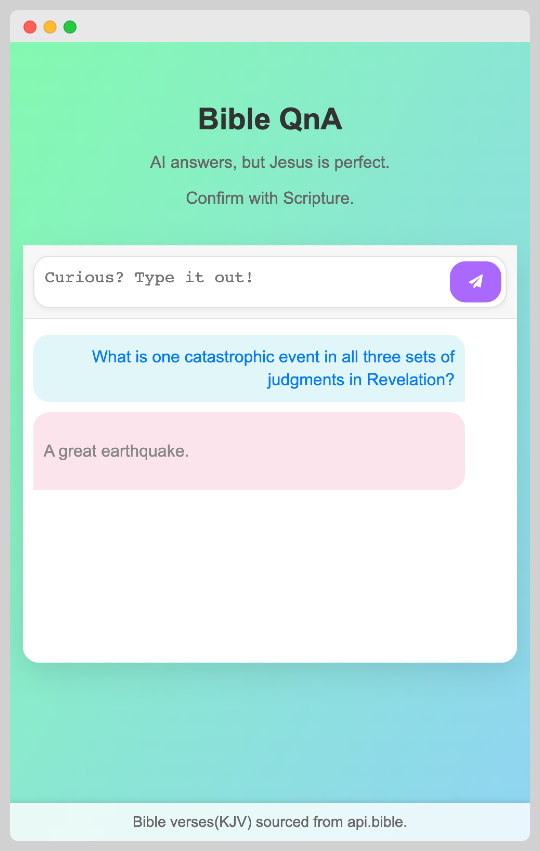

Creating the Q&A UI with Python Flask

With the backend in place, I turned to the user interface, which I built with Python's Flask framework. Flask is lightweight and flexible, which made it a good fit for a simple, efficient Q&A interface.
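A minimal sketch of such a Flask front end. It assumes the retrieval step is wrapped in a `search(question)` function that returns `(reference, text, score)` tuples. `create_app`, `search`, and the inline template are hypothetical; a real app would more likely keep the HTML in a template file.

```python
from typing import Callable, List, Tuple

from flask import Flask, render_template_string, request

# One (reference, verse text, score) tuple per result; this shape is an assumption.
Result = Tuple[str, str, float]

PAGE = """
<form method="post">
  <input name="question" value="{{ question }}" placeholder="Ask a question about the KJV">
  <button type="submit">Ask</button>
</form>
<ul>
  {% for ref, text, score in results %}
    <li><strong>{{ ref }}</strong> {{ text }} ({{ "%.3f"|format(score) }})</li>
  {% endfor %}
</ul>
"""


def create_app(search: Callable[[str], List[Result]]) -> Flask:
    """Build the Q&A app around a `search` function that returns the best-matching verses."""
    app = Flask(__name__)

    @app.route("/", methods=["GET", "POST"])
    def ask():
        question = request.form.get("question", "").strip()
        results = search(question) if question else []
        # render_template_string autoescapes, so the user's question is rendered safely.
        return render_template_string(PAGE, question=question, results=results)

    return app
```

Injecting `search` keeps the web layer separate from OpenAI and Pinecone, so the page can be developed and tested with a stub before the vector store is wired in.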

Leveraging OpenAI for Response Generation

When a user posed a question, the system needed to return meaningful responses. The question was embedded with the same OpenAI model, and LangChain's Pinecone vector store then searched the verse embeddings stored in Pinecone for the closest matches, pinpointing the verses most relevant to the question.
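Conceptually, the vector store's search does something like the brute-force sketch below, assuming the index uses the cosine metric: the question's embedding is compared with every stored verse vector, and the highest-scoring verses win. Pinecone performs this server-side over an index rather than in a Python loop, and through LangChain the whole step is typically a single call such as `similarity_search_with_score(question, k=5)`. The helper names here are hypothetical and the code is for illustration only.

```python
import math
from typing import Dict, List, Tuple

Vector = List[float]


def cosine(a: Vector, b: Vector) -> float:
    """Cosine similarity: dot product divided by the product of the vector lengths."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(query: Vector, stored: Dict[str, Vector], k: int = 5) -> List[Tuple[str, float]]:
    """Rank stored verse vectors by similarity to the query vector and keep the best k."""
    scored = [(verse_id, cosine(query, vec)) for verse_id, vec in stored.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]
```

A linear scan like this is fine for a handful of vectors but grows with the size of the Bible; that cost is what a dedicated vector index is meant to avoid.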

Displaying Results

Finally, the best-fit results retrieved through the embeddings were displayed on the Flask app's Q&A interface, letting users ask questions and receive relevant passages from the Bible in real time.

Efficient Deployment with AWS Community Builders Credits

The AWS Community Builders program provides its members with $500 in AWS credits each year, which let me deploy multiple projects without incurring any direct costs. The credits cover an AWS EC2 instance hosting the Bible Q&A app and my own Jenkins server, which has been instrumental in managing the deployments of my hobby projects.

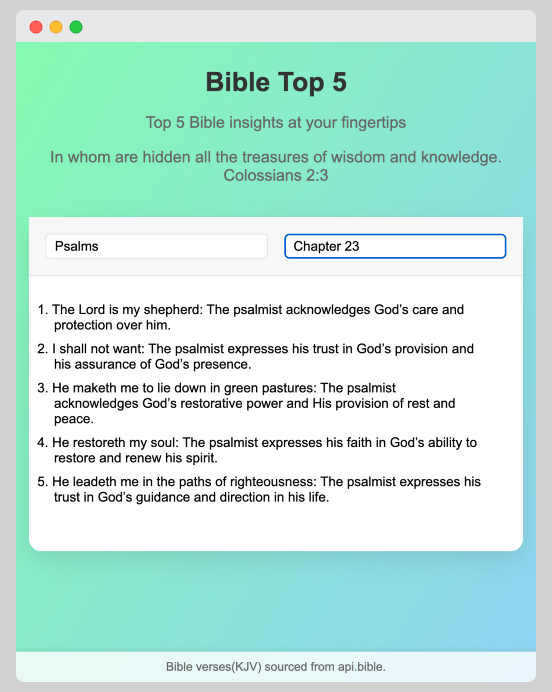

Another application that benefits from these credits is BibleTop5, which generates summaries of Bible content to make scripture more accessible and engaging for its users. The credits let me deploy and maintain BibleTop5 smoothly as well. Thank you, AWS!

In short, combining OpenAI's embedding model, Pinecone's vector storage, and Flask's flexibility produced an interactive Bible Q&A application, and shows how these tools can make Bible verses easier to explore for modern users.