In natural language processing (NLP), embeddings are vital in converting text data into a format that machines can understand. These dense vector representations of text capture the semantic meaning and are essential for various NLP tasks, including text classification, sentiment analysis, and conversational AI.

Pinecone is a vector database that stores and retrieves embeddings efficiently. It is optimized for large-scale machine learning applications, offering fast and accurate similarity searches. Pinecone is particularly well-suited for applications requiring real-time retrieval of similar items based on embeddings.

OpenAI is a leading AI research organization that offers powerful language models for generating embeddings. OpenAI’s models are trained on extensive data and can produce high-quality embeddings that encapsulate rich semantic information.

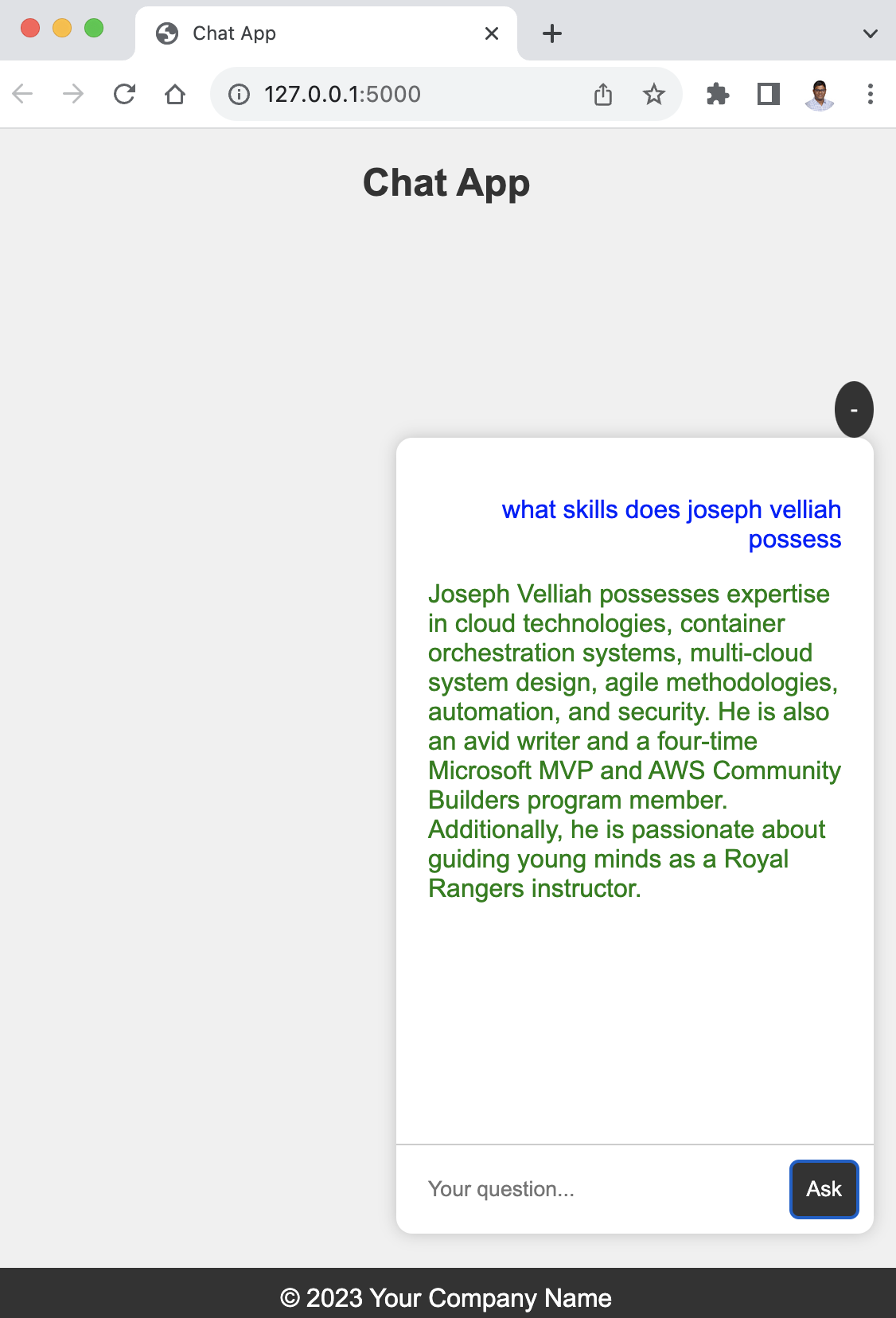

The Pinecone-OpenAI Project offers a comprehensive solution for generating embeddings from custom text data using OpenAI, storing them in a Pinecone index, and building a conversational bot for efficient retrieval and interaction. The project includes a script for ingesting and processing data and a Flask web application for conversational retrieval using the generated embeddings.

The ingestion script loads custom text data from a specified directory, splits the documents into smaller chunks, generates embeddings for each chunk using OpenAI, and stores the embeddings in a Pinecone index. The Flask web application provides an interface for a conversational bot that retrieves relevant responses based on user input using the embeddings stored in the Pinecone index.

This project is highly customizable and can be easily deployed in different environments using Docker. By leveraging the capabilities of OpenAI and Pinecone, it delivers high-quality embeddings and fast retrieval, making it an ideal solution for applications requiring real-time conversational retrieval based on custom data.

Explore the Pinecone-OpenAI Project on GitHub for more details and comprehensive installation and usage instructions.

After detailing the implementation using Pinecone, it’s worth noting that there’s an alternative approach using the Milvus Open Source Database. Just as with Pinecone, Milvus allows us to efficiently store and manage embeddings, but with its own set of features and advantages. In this version of the project, the embeddings are saved directly in a Milvus collection. I encourage readers to explore both and determine which solution best fits their needs. As always, I’m here to answer any questions and provide further insights.